Keyword Research Tool: Build a Full Content Plan With One Tool

A repeatable five-stage workflow that turns one keyword research tool into a prioritized, brief-ready editorial calendar — formula and template inside.

Most teams glue a keyword research tool to a clustering script, a brief generator, and a separate calendar app. The handoffs leak context, intent definitions drift between vendors, and the calendar fills with topics nobody can defend on a Monday standup. This guide collapses the mess into one repeatable workflow inside a single tool — from seed term to a prioritized, brief-ready editorial calendar — and shares the scoring formula, refresh cadence, and brief template our editorial team uses for every commercial cluster we publish.

Why build a content plan inside a single keyword research tool

Multi-tool stacks fail three ways. The same query gets tagged commercial in one product and transactional in another. Exports lose context — a CSV from a discovery tool will not carry the cluster IDs a brief generator expects, so someone rebuilds the join in a spreadsheet. And cost compounds: each seat is justified on its own, but together they devour the budget that should have gone to writers.

A consolidated workflow keeps one source of truth for intent, cluster membership, and priority. You stop reconciling exports and start arguing about content, which is the conversation that moves rankings. Google's helpful-content guidance keeps repeating that depth and originality outrank breadth, and depth comes from time spent reviewing the work, not from maintaining tooling glue.

What to look for in a keyword research tool

Tool roundups list every checkbox feature; only a handful matter for actually shipping a content plan. Use this list as a procurement filter, not a shopping wish list.

- Deep seed expansion. Tools that go four layers past the seed, not just synonyms. Commercial intent lives in long-tails like "best keyword research tool for solo consultants," not in the head term.

- Explainable intent labels. The tool should tell you why it tagged a query commercial — usually because the SERP is dominated by product pages or comparison content.

- Override-friendly clustering. The moment you cannot merge two clusters by hand, the algorithm owns your editorial direction.

- Exportable everything. CSV, JSON, and a stable API. Lock-in to a proprietary brief viewer is a tax on every future content audit.

- Search Console and BigQuery hooks. Real ranking data should grade the tool's predictions; Google's Search Console documentation is the simplest source of ground truth.

- Per-API-call billing for batch work. Per-seat is fine for editors. It is unfair for the strategist running fifty seed terms in a sprint.

- Versioned exports. When the algorithm shifts, you need to know which run produced which calendar slot.

None of that requires a $499 per-seat enterprise plan. A focused keyword research tool with a real API and clean exports beats the all-in-one suites for the workflow below.

Step-by-step workflow: seed keyword to full content plan

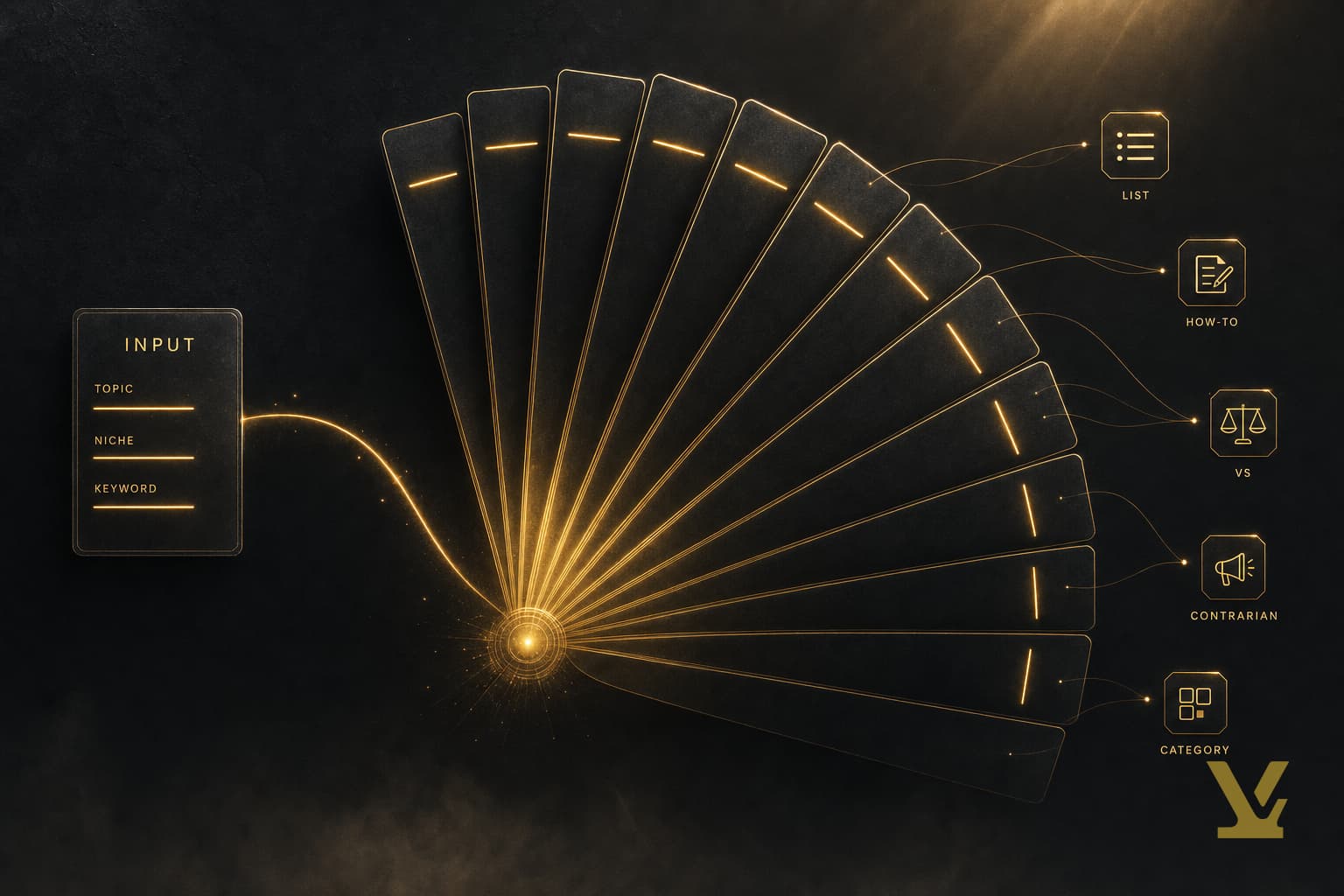

Here is the five-stage chain we run for every new commercial cluster. Each stage produces a discrete artifact you can review before moving on, which is what turns a one-shot keyword dump into a defensible content plan.

- Seed. Pick three to five seeds — your product category, primary use case, and one bottom-of-funnel buying phrase. Resist starting with a single broad head term.

- Expand. Run each seed through expansion until volume drops below your floor. We aim for ~150 raw queries per seed; fewer than 30 means the seed is too narrow, more than 400 means it is two seeds wearing a trenchcoat.

- Label intent. Tag every query informational, commercial, transactional, or navigational. Spot-check ten labels against live SERPs: if the tool says commercial and Google's top results are Wikipedia and other reference pages, the label is wrong.

- Cluster. Group queries that share both topical centroid and intent. Mixed-intent clusters become unfocused articles that rank for nothing.

- Prioritize and slot. Rank clusters with the scoring formula below, then map them to calendar slots. Every slot needs an owner and a draft date.
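The expansion thresholds and the mixed-intent rule in the stages above can be checked mechanically before a human review. The following is a minimal Python sketch, assuming the tool's export gives you a raw query count per seed and one (cluster ID, intent) pair per query. The function names and data shapes are illustrative assumptions, not any tool's API.

```python
from collections import defaultdict

# Thresholds from the workflow above: about 150 raw queries per seed is the
# target, fewer than 30 is too narrow, more than 400 is too broad.
MIN_QUERIES = 30
MAX_QUERIES = 400


def triage_seed(raw_query_count: int) -> str:
    """Classify a seed by how many raw queries its expansion produced."""
    if raw_query_count < MIN_QUERIES:
        return "too narrow"
    if raw_query_count > MAX_QUERIES:
        return "split into two seeds"
    return "ok"


def mixed_intent_clusters(rows: list[tuple[str, str]]) -> list[str]:
    """Return IDs of clusters whose queries carry more than one intent label.

    `rows` is a list of (cluster_id, intent) pairs, one per query.
    """
    intents_by_cluster: dict[str, set[str]] = defaultdict(set)
    for cluster_id, intent in rows:
        intents_by_cluster[cluster_id].add(intent)
    return sorted(cid for cid, intents in intents_by_cluster.items() if len(intents) > 1)


# Hypothetical sample data, for illustration only.
sample = [
    ("c1", "commercial"),
    ("c1", "commercial"),
    ("c2", "commercial"),
    ("c2", "informational"),
]
print(triage_seed(150), mixed_intent_clusters(sample))
```

A flagged cluster is a prompt for manual review (split it or re-label it), not an automatic rejection.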

The artifact at the end of stage five is one file: cluster ID, primary keyword, intent, score, owner, draft date, target slot. That is your editorial calendar.
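As a rough illustration, that one-file calendar could be modeled as a typed row and written out as CSV. The seven columns come from the paragraph above; the Python field names, the ISO date strings, and the sample values are assumptions made for this sketch.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class CalendarRow:
    # The seven columns named in the text; the field names are our own choice.
    cluster_id: str
    primary_keyword: str
    intent: str
    score: float
    owner: str
    draft_date: str   # ISO date string, e.g. "2025-01-15"
    target_slot: str  # ISO date string for the publish slot


def to_csv(rows: list[CalendarRow]) -> str:
    """Serialize calendar rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(CalendarRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()


# Hypothetical example row.
calendar = [
    CalendarRow("c1", "keyword research tool", "commercial", 12.5, "dana", "2025-01-15", "2025-02-03")
]
print(to_csv(calendar))
```

Keeping the column order fixed in one place (the dataclass) is what makes later exports comparable with earlier ones.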

Example workflow using VarynForge (annotated outputs)

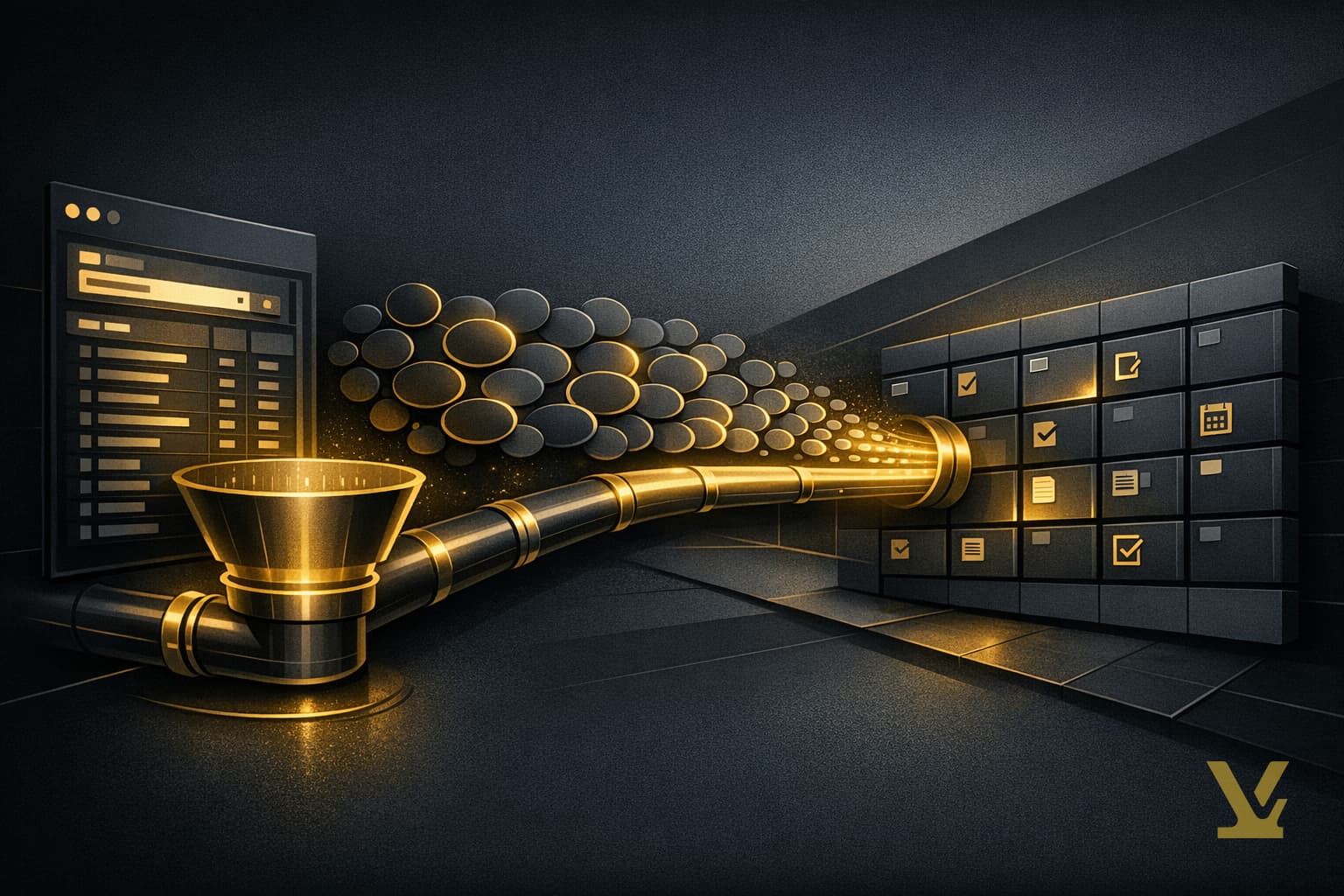

Inside VarynForge, the five stages collapse into three screens. Drop seeds into the discovery panel; expansion runs while you review intent labels for the first batch; the clustering view drops you into a draggable priority board. The export is a CSV plus a JSON manifest that mirrors the brief schema your CMS expects, so the calendar slot links to the brief, the brief links to the cluster, and the cluster links back to the original seed. No manual joins, no orphan rows.

On a different stack, replicate the manifest pattern by hand: every export carries the cluster ID and parent seed in a stable column. The third refresh cycle is when this pays off — diffing this month's calendar against last month's takes seconds instead of an afternoon.
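The month-over-month diff described above is simple once every row carries a stable cluster ID. Here is a minimal sketch, assuming each export has already been loaded into a dictionary keyed by cluster ID; the row contents and names are hypothetical.

```python
def diff_calendars(previous: dict[str, dict], current: dict[str, dict]) -> dict[str, list[str]]:
    """Compare two calendar exports keyed by cluster ID.

    Returns the cluster IDs that were added, removed, or changed.
    """
    prev_ids, curr_ids = set(previous), set(current)
    changed = sorted(cid for cid in prev_ids & curr_ids if previous[cid] != current[cid])
    return {
        "added": sorted(curr_ids - prev_ids),
        "removed": sorted(prev_ids - curr_ids),
        "changed": changed,
    }


# Hypothetical exports from two consecutive refresh cycles.
last_month = {
    "c1": {"parent_seed": "keyword research tool", "slot": "2025-02-03"},
    "c2": {"parent_seed": "keyword research tool", "slot": "2025-02-17"},
}
this_month = {
    "c1": {"parent_seed": "keyword research tool", "slot": "2025-02-10"},
    "c3": {"parent_seed": "content brief", "slot": "2025-03-03"},
}
print(diff_calendars(last_month, this_month))
```

Without a stable join key, the same comparison means matching rows by keyword text, which breaks as soon as a primary keyword is renamed.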

Cluster keywords and prioritize topics with a 1–5 IRE score

Clustering is where most one-tool workflows quietly fail. Tools tend to either over-cluster, collapsing fifteen topic angles into one bloated mega-cluster, or under-cluster, leaving eighty single-query "topics" no writer can scope. Manual review on the first pass is non-optional.

For prioritization, we use one formula we call IRE — Intent × Reach ÷ Effort. Score each cluster from 1 to 5 on each axis:

- Intent (1–5): how close the cluster's dominant intent sits to your conversion event. A "buy" query scores 5, a generic "what is" scores 2.

- Reach (1–5): combined monthly volume across the cluster, bucketed against your historical traffic floor.

- Effort (1–5): SERP difficulty plus content depth plus asset overhead. A long technical tutorial scores 5; a short FAQ scores 1.

Multiply intent by reach, then divide by effort, so scores run from 0.2 (1 × 1 ÷ 5) to 25 (5 × 5 ÷ 1). Sort descending. Ship the top decile first, batch the next two deciles into the following sprint, and shelve the long tail. IRE beats sorting on raw difficulty because difficulty alone punishes high-intent commercial terms, which are exactly the terms worth losing money on at first because they convert.
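The scoring and bucketing can be sketched in a few lines of Python. This is an illustration under stated assumptions: the `Cluster` type, the bucket names, and the rounding-up of the decile size are our own choices, not part of any tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Cluster:
    cluster_id: str
    intent: int  # 1-5
    reach: int   # 1-5
    effort: int  # 1-5


def ire_score(cluster: Cluster) -> float:
    """Intent times Reach divided by Effort, each axis scored 1 to 5."""
    for axis in ("intent", "reach", "effort"):
        value = getattr(cluster, axis)
        if not 1 <= value <= 5:
            raise ValueError(f"{axis} must be between 1 and 5, got {value}")
    return cluster.intent * cluster.reach / cluster.effort


def prioritize(clusters: list[Cluster]) -> dict[str, list[Cluster]]:
    """Sort by IRE descending, then split into top decile, next two deciles, and the rest."""
    ranked = sorted(clusters, key=ire_score, reverse=True)
    decile = max(1, math.ceil(len(ranked) / 10))  # assumption: round the decile size up
    return {
        "ship_now": ranked[:decile],
        "next_sprint": ranked[decile:3 * decile],
        "shelved": ranked[3 * decile:],
    }


# A high-intent, mid-effort cluster: 5 * 4 / 2 = 10.0
print(ire_score(Cluster("c1", intent=5, reach=4, effort=2)))
```

With ten clusters this ships one, queues two for the next sprint, and shelves seven.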

Guardrail: review every 5/5/1 cluster (high intent, high reach, trivial effort) for cannibalization risk. Suspiciously easy commercial wins usually mean you already have a thin page targeting the cluster. Merge or rewrite first — see our true-cost buyer's guide for the math on why duplicate intent is the most expensive editorial mistake.
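One way to automate the first pass of that guardrail is to flag every 5/5/1 cluster and list any existing pages that already target one of its keywords. The sketch below assumes you can export a mapping of existing URLs to their target keyword; that mapping, the dictionary keys, and the function name are all hypothetical.

```python
def flag_for_cannibalization_review(
    clusters: list[dict], existing_pages: dict[str, str]
) -> dict[str, list[str]]:
    """Map each 5/5/1 cluster ID to existing URLs that target one of its keywords.

    `existing_pages` maps URL -> target keyword. A flagged cluster with an
    empty list still needs a manual look; it just has no exact-match overlap.
    """
    flagged: dict[str, list[str]] = {}
    for cluster in clusters:
        if (cluster["intent"], cluster["reach"], cluster["effort"]) != (5, 5, 1):
            continue
        keywords = {kw.lower() for kw in cluster["keywords"]}
        flagged[cluster["cluster_id"]] = sorted(
            url for url, target in existing_pages.items() if target.lower() in keywords
        )
    return flagged


# Hypothetical data for illustration.
clusters = [
    {"cluster_id": "c1", "intent": 5, "reach": 5, "effort": 1,
     "keywords": ["keyword tool pricing", "keyword tool cost"]},
    {"cluster_id": "c2", "intent": 4, "reach": 5, "effort": 1,
     "keywords": ["keyword tool reviews"]},
]
pages = {"/pricing-old": "Keyword Tool Pricing", "/blog/reviews": "keyword tool reviews"}
print(flag_for_cannibalization_review(clusters, pages))
```

Exact keyword matching is a deliberately crude proxy here; a real check would also compare Search Console queries per URL.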

Turn clusters into a calendar of repeatable content briefs

A calendar is a contract: by date X, person Y publishes article Z targeting cluster C. The keyword research tool produces the cluster; the calendar enforces the contract. Map clusters to slots in priority order, then assign a content format — pillar, supporting, comparison, FAQ, or update — based on the dominant intent and SERP layout. If the top-ranking pages are listicles, ship a listicle; fighting the SERP format is a slow way to lose.

Two scheduling rules save weeks of cleanup. First, never schedule two articles from the same cluster less than 30 days apart; the second competes with the first for the same queries before the first has finished indexing and settling. Second, every supporting article links back to the pillar on publish day.
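The 30-day spacing rule is easy to lint before a calendar is approved. This is a minimal sketch, assuming the calendar can be reduced to (cluster ID, publish date) pairs; the function name and sample dates are illustrative.

```python
from datetime import date

MIN_GAP_DAYS = 30  # from the first scheduling rule above


def spacing_violations(slots: list[tuple[str, date]]) -> list[tuple[str, date, date]]:
    """Return (cluster_id, earlier, later) for same-cluster slots under 30 days apart."""
    by_cluster: dict[str, list[date]] = {}
    for cluster_id, publish_date in slots:
        by_cluster.setdefault(cluster_id, []).append(publish_date)

    violations = []
    for cluster_id, dates in sorted(by_cluster.items()):
        dates.sort()
        # Checking consecutive dates is enough to catch every too-close pair's cluster.
        for earlier, later in zip(dates, dates[1:]):
            if (later - earlier).days < MIN_GAP_DAYS:
                violations.append((cluster_id, earlier, later))
    return violations


# Hypothetical calendar: c1 has two slots 21 days apart, c2 exactly 30 days apart.
slots = [
    ("c1", date(2025, 1, 6)),
    ("c1", date(2025, 1, 27)),
    ("c2", date(2025, 1, 6)),
    ("c2", date(2025, 2, 5)),
]
print(spacing_violations(slots))
```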

Content brief template and export checklist

Every brief should carry the same nine fields, no more, no less. The discipline matters more than the schema:

- Cluster ID and parent seed (stable join keys to the keyword export).

- Primary keyword and 3–5 supporting variants (the writer should not have to look these up).

- Dominant intent and SERP format (listicle, tutorial, definition, or comparison).

- Target word count and image count (roughly one image per 400 words).

- Required H2s and FAQ questions (pulled from the cluster's "people also ask" extraction).

- Internal link targets (named pages with anchor suggestions).

- Two to three primary citation sources (Google, W3C, vendor docs — never another blog).

- Owner, reviewer, draft date.

- IRE score and the slot date (closes the loop with the calendar).

Export every brief as Markdown for the writer and JSON for the CMS. The JSON manifest is what lets a future audit answer "which keyword cluster produced this article" in one query.
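A brief with those nine fields could be represented and exported roughly as follows. This is a sketch, not a CMS schema: the attribute names, the Markdown layout, and the sample values are assumptions, and some of the nine fields are split into two attributes for convenience.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Brief:
    cluster_id: str                 # field 1: join keys
    parent_seed: str
    primary_keyword: str            # field 2: keywords
    supporting_variants: list[str]
    dominant_intent: str            # field 3: intent and SERP format
    serp_format: str
    target_word_count: int          # field 4: length and images
    image_count: int
    required_h2s: list[str]         # field 5: structure
    faq_questions: list[str]
    internal_links: list[str]       # field 6
    citation_sources: list[str]     # field 7
    owner: str                      # field 8: people and draft date
    reviewer: str
    draft_date: str
    ire_score: float                # field 9: score and slot
    slot_date: str


def to_json(brief: Brief) -> str:
    """JSON export for the CMS."""
    return json.dumps(asdict(brief), indent=2, sort_keys=True)


def to_markdown(brief: Brief) -> str:
    """Markdown export for the writer: one bullet per attribute."""
    lines = [f"# Brief: {brief.primary_keyword}", ""]
    for key, value in asdict(brief).items():
        label = key.replace("_", " ").capitalize()
        rendered = ", ".join(value) if isinstance(value, list) else str(value)
        lines.append(f"- **{label}:** {rendered}")
    return "\n".join(lines)


# Hypothetical brief; image_count follows the "one image per 400 words" rule of thumb.
brief = Brief(
    "c1", "keyword research tool", "best keyword research tool",
    ["keyword tool comparison", "keyword tool for agencies", "keyword tool pricing"],
    "commercial", "comparison", 2000, 2000 // 400,
    ["What to look for", "Pricing"], ["Is a free tool enough?"],
    ["/pricing"], ["Google Search Console documentation"],
    "dana", "lee", "2025-01-15", 10.0, "2025-02-03",
)
print(to_markdown(brief))
```

Both exports come from the same object, so the writer's Markdown and the CMS's JSON cannot drift apart.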

Common mistakes, troubleshooting, and refresh cadence

The same five mistakes show up across every consolidated workflow we have audited. Each is preventable with a small process change.

- Sorting on raw volume. Volume is a vanity number until you weight it by intent and conversion. Use IRE, not search volume.

- Static cluster trees. Clusters drift as the SERP shifts. A cluster that was commercial last quarter may be navigational now because Google added a knowledge panel. Re-label intent at every refresh.

- Over-reliance on the tool's default difficulty score. Every tool's difficulty is a black box. Pair it with a manual SERP review on the top three clusters before you commit a sprint.

- Ignoring Google Trends for cyclical clusters. A cluster that looks flat year-on-year may have a sharp seasonal peak. Schedule publication for six weeks before the peak, not during it.

- Refreshing too rarely or too often. Quarterly is too slow for fast-moving industries; weekly is too noisy and burns reviewer attention. Monthly plus an event trigger — algorithm update, product launch, competitor publishing spree — is the cadence we have settled on after two years of iteration.
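Two of the fixes above reduce to date arithmetic: publishing six weeks ahead of a seasonal peak, and refreshing monthly or on an event trigger. The sketch below assumes "monthly" can be approximated as 30 days and that event triggers are recorded as a simple boolean; both are simplifications made for illustration.

```python
from datetime import date, timedelta

LEAD_TIME = timedelta(weeks=6)          # publish six weeks before the peak
REFRESH_INTERVAL = timedelta(days=30)   # assumption: "monthly" approximated as 30 days


def publish_date_for_peak(peak: date) -> date:
    """Publish date that leaves six weeks of lead time before a seasonal peak."""
    return peak - LEAD_TIME


def refresh_due(last_refresh: date, today: date, event_triggered: bool = False) -> bool:
    """True if a month has passed or an event (update, launch, competitor spree) fired."""
    return event_triggered or (today - last_refresh) >= REFRESH_INTERVAL


# Hypothetical peak on 28 November 2025: publish by 17 October 2025.
print(publish_date_for_peak(date(2025, 11, 28)))
print(refresh_due(date(2025, 1, 2), date(2025, 1, 20)))
```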

When in doubt, log every refresh decision in a short changelog tied to the calendar. Future-you debugging a ranking dip will thank present-you for the timestamp.

Measure success and iterate the plan

A content plan is a hypothesis: that the chosen clusters, prioritized in this order, will produce the conversions you forecast. Track three numbers per cluster after publication: indexed-page count, organic clicks (Google Search Console), and assisted conversions in your analytics product. After 90 days, compare actual results against the forecast behind each cluster's IRE score; clusters that under-deliver get a rewrite or a deprecation, not a "we'll see" excuse.

Feed the comparison back into the next refresh: re-tune how you score intent and effort for any cluster category that systematically over- or under-delivered. Two iterations are usually enough to stabilize. After that, the keyword research tool stops being a research tool and starts being the operating system for your content team.
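The 90-day comparison can be reduced to a delivery ratio per cluster and an average per cluster category. This sketch assumes you have a numeric forecast (for example, organic clicks) for each cluster; the forecast itself, the category labels, and the sample numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def delivery_ratio(actual: float, forecast: float) -> float:
    """Actual result divided by forecast; below 1.0 means the cluster under-delivered."""
    if forecast <= 0:
        raise ValueError("forecast must be positive")
    return actual / forecast


def category_bias(results: list[tuple[str, float, float]]) -> dict[str, float]:
    """Average delivery ratio per category from (category, actual, forecast) rows.

    A category consistently far from 1.0 suggests its intent or effort
    scores need re-tuning at the next refresh.
    """
    ratios: dict[str, list[float]] = defaultdict(list)
    for category, actual, forecast in results:
        ratios[category].append(delivery_ratio(actual, forecast))
    return {category: round(mean(values), 2) for category, values in ratios.items()}


# Hypothetical 90-day results: (category, actual clicks, forecast clicks).
results = [
    ("comparison", 80, 100),
    ("comparison", 60, 100),
    ("tutorial", 150, 100),
]
print(category_bias(results))
```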

Frequently Asked Questions

Can a single keyword research tool replace a full SEO stack?

For discovery, clustering, prioritization, and briefs — yes. For technical crawls, log analysis, and rank tracking, you still need separate tools. The point is to remove glue between editorial stages, not to pretend a keyword tool is also a JavaScript renderer.

Which tool metric should I prioritize beyond search volume?

Intent fit, then cluster reach, then SERP difficulty — in that order. Volume is a multiplier, not a ranker. A cluster with a tenth the volume but five times the intent fit will out-convert and out-compound the bigger one.

How often should I refresh the content plan?

Monthly works for most teams, with event-triggered refreshes on top. Set a reminder for the first business day of every month and pre-commit to refreshes within 72 hours of any documented Google ranking system update or major competitor launch. Weekly is overkill; quarterly is too slow.
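Both reminders are easy to compute. The following sketch treats Monday to Friday as business days and ignores public holidays, which is an assumption you may need to adjust for your region; the function names are illustrative.

```python
from datetime import date, datetime, timedelta


def first_business_day(year: int, month: int) -> date:
    """First weekday (Mon-Fri) of the month; public holidays are not considered."""
    day = date(year, month, 1)
    while day.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        day += timedelta(days=1)
    return day


def event_refresh_deadline(event_time: datetime) -> datetime:
    """Latest time to finish a refresh: 72 hours after the triggering event."""
    return event_time + timedelta(hours=72)


# 1 February 2025 falls on a Saturday, so the reminder lands on Monday the 3rd.
print(first_business_day(2025, 2))
print(event_refresh_deadline(datetime(2025, 3, 10, 9, 0)))
```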

Do I still need spreadsheets if my tool exports JSON?

Spreadsheets stay useful for one-off prioritization conversations with stakeholders who do not live in your tool. Treat them as scratch surfaces. Anything driving editorial decisions belongs back in the keyword research tool, version-tagged.

How VarynForge fits in

VarynForge is a content-opportunity service built around the same single-tool pattern this guide describes: discovery, clustering, IRE-style prioritization, and brief export from one workspace, with cluster IDs and parent seeds threaded through every artifact. Useful when the five-stage workflow already runs on spreadsheets and the reconciliation tax is the bottleneck. See current VarynForge pricing.

Key Takeaways

Consolidating on one keyword research tool is the discipline of keeping one source of truth for intent, clusters, and priority. The five-stage workflow, the IRE scoring formula, and the monthly refresh cadence together form the smallest system that survives contact with a real publishing schedule. Adopt the brief template, enforce the calendar contract, and audit the workflow once a quarter against actual ranking data.

Further Reading

- Best keyword research tool for SEO

- How to do keyword research for topic clusters

- Best AI SEO tools: practical uses and human checks

- Semrush Keyword Magic Tool
