Types of Search Intent: A Vector Framework for Content Teams

Stop guessing search intent. Score every query as a 4-axis vector from SERP features in under a minute, then map the dominant and runner-up axes to a page format.

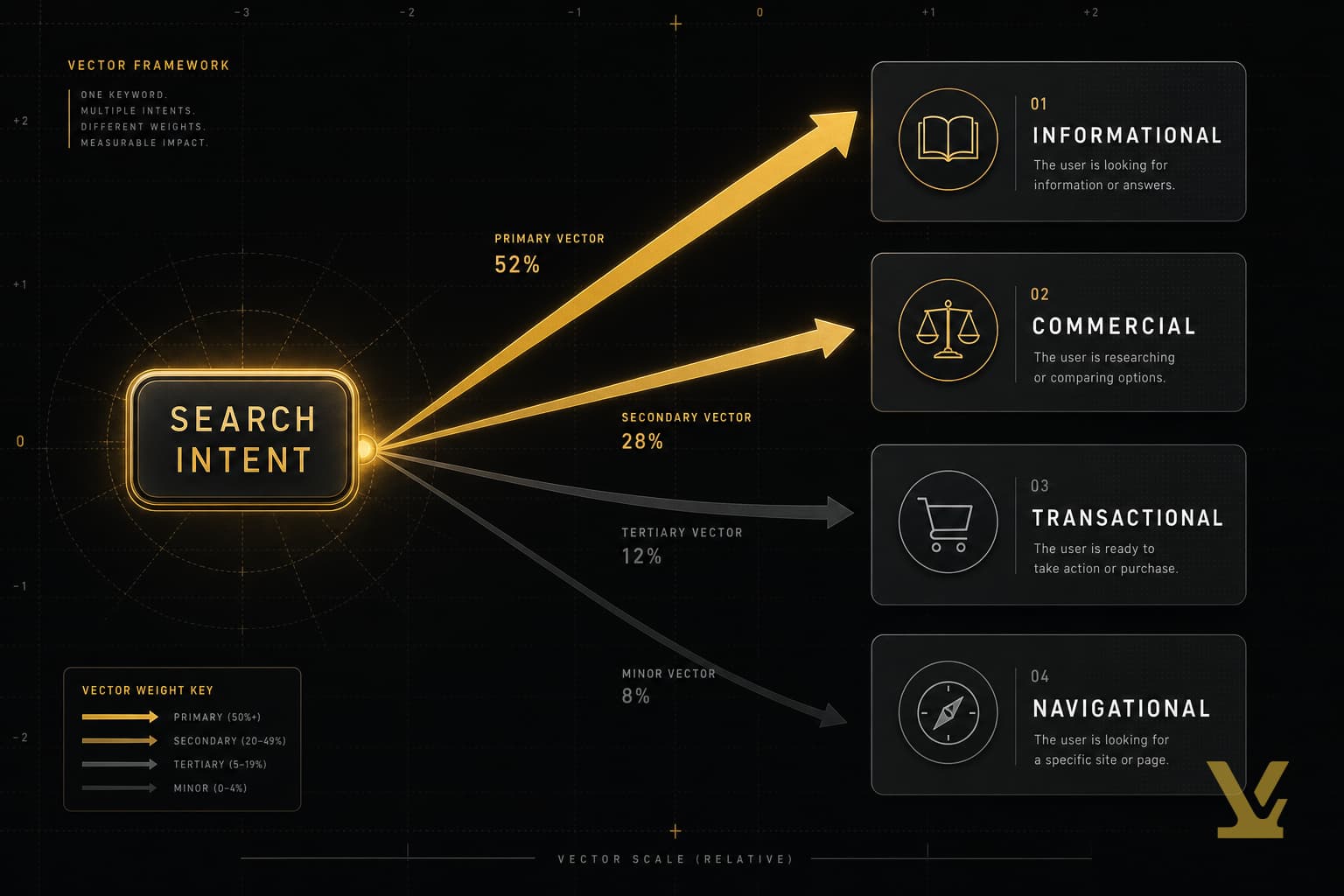

Most teams still treat search intent as a single label glued to a keyword: informational, navigational, commercial, or transactional. That model is wrong often enough to break content plans. Mid-tail SERPs routinely mix feature types — a featured snippet next to shopping ads next to a local pack — meaning Google itself sees the query as partly informational, partly commercial, partly local at the same time.

This guide replaces the single-label model with a four-component intent vector you can score in under a minute from the live SERP. The dominant component decides the article's primary format. The runner-up component decides the section you must include to win the SERP. That second move is what most competitor articles miss, and it is why correctly classified pages still fail to rank.

What is search intent — a definition for content teams

Search intent is the goal a person is trying to satisfy with a query. Google's Search Quality Rater Guidelines tell raters to score intent before grading content quality — which is why an "amazing" article can rank below a thinner page that matches intent more cleanly.

The operational definition for content teams is narrower: search intent is the smallest set of jobs a single page must satisfy to keep a searcher from clicking back to the SERP. Three implications follow:

- Intent is plural. Most queries carry a primary job and at least one supporting job. Treat it as a vector, not a label.

- Intent is observable. The SERP layout — features, ad slots, panel types — is Google's published guess at the vector.

- Intent shifts. AI Overviews and seasonal patterns can change the vector for the same query within months, so the score has a shelf life.

Types of search intent: the four core components

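Classic taxonomies lean on the same small set of components. Andrei Broder's 2002 web-search paper named three (informational, navigational, and transactional), and later guides from Yoast and Semrush add commercial as a fourth. Treat the four as the axes of the vector, not as mutually exclusive bins: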

- Informational — knowledge-seeking queries like "what is search intent" or "structured data examples". Featured snippets, PAA, and knowledge panels signal high I-weight.

- Commercial — comparison queries like "best CRM for agencies" or "Notion vs Coda". Listicle SERPs and merchant rating panels signal high C-weight.

- Transactional — ready-to-act queries like "buy nikon zf" or "download obsidian". Shopping ads and product pack carousels signal high T-weight.

- Navigational — destination-driven queries like "varynforge login" or "github status". A brand sitelink block at position one signals high N-weight.

Search Engine Land argues there are now more than four intent types, citing micro-intents like local-pack and lyrics queries. The vector view absorbs that critique: local and generative are not new axes — they are blends.

Score search intent: a 4-component vector from the SERP

The vector is I, C, T, N — each on a 0 to 3 scale. The score for each axis is the count of distinct SERP feature types Google ships for that intent class. Chrome plus a 30-second scroll is enough; no paid tool required.

Step 1 — open the SERP in incognito; set location to the audience country.

Step 2 — count feature types per axis, capped at 3. Featured snippet + PAA + knowledge panel push I. Shopping ads + merchant pack push T. Comparison listicles push C. Brand sitelinks push N.

Step 3 — identify the dominant and runner-up. The dominant axis dictates the primary format; the runner-up dictates the supporting section. Ties at the top mean a hybrid page.

Worked example. The query "keyword research tool" returns featured snippet (I), People Also Ask (I), an organic listicle of "best 12 tools" (C), a comparison table snippet (C), and one shopping ad (T). Counts: I=2, C=2, T=1, N=0. Dominant = I and C tied. Runner-up = T. The rubric says ship a hybrid explainer plus comparison with a transactional CTA strip — exactly the shape of the page that wins. Ahrefs published a similar SERP-feature method, but capped at a single label. Scoring all four axes surfaces the runner-up.
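The scoring steps and the worked example can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the feature labels (`featured_snippet`, `merchant_pack`, and so on) are hypothetical names for what you note while scanning the SERP, and the feature-to-axis mapping simply restates Step 2.

```python
from collections import Counter

# Feature-to-axis mapping restated from the scoring steps; the string keys
# are hypothetical labels assigned by hand while scanning the SERP.
FEATURE_AXIS = {
    "featured_snippet": "I",
    "people_also_ask": "I",
    "knowledge_panel": "I",
    "comparison_listicle": "C",
    "comparison_table_snippet": "C",
    "shopping_ads": "T",
    "merchant_pack": "T",
    "brand_sitelinks": "N",
}
AXES = ("I", "C", "T", "N")
CAP = 3  # each axis is scored on a 0 to 3 scale


def score_vector(features):
    """Count distinct feature types per axis, capped at CAP."""
    counts = Counter(FEATURE_AXIS[f] for f in set(features) if f in FEATURE_AXIS)
    return {axis: min(counts[axis], CAP) for axis in AXES}


def dominant_and_runner_up(vector):
    """Return (dominant axes, runner-up axes); ties are kept as lists."""
    levels = sorted({v for v in vector.values() if v > 0}, reverse=True)
    if not levels:
        return [], []
    dominant = [a for a in AXES if vector[a] == levels[0]]
    runner_up = [a for a in AXES if len(levels) > 1 and vector[a] == levels[1]]
    return dominant, runner_up


# The "keyword research tool" SERP from the worked example.
serp = [
    "featured_snippet",
    "people_also_ask",
    "comparison_listicle",
    "comparison_table_snippet",
    "shopping_ads",
]
vector = score_vector(serp)
print(vector, dominant_and_runner_up(vector))
```

A tie at the top comes back as two dominant axes, which is the signal for a hybrid page; the runner-up is then the next-highest non-zero axis.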

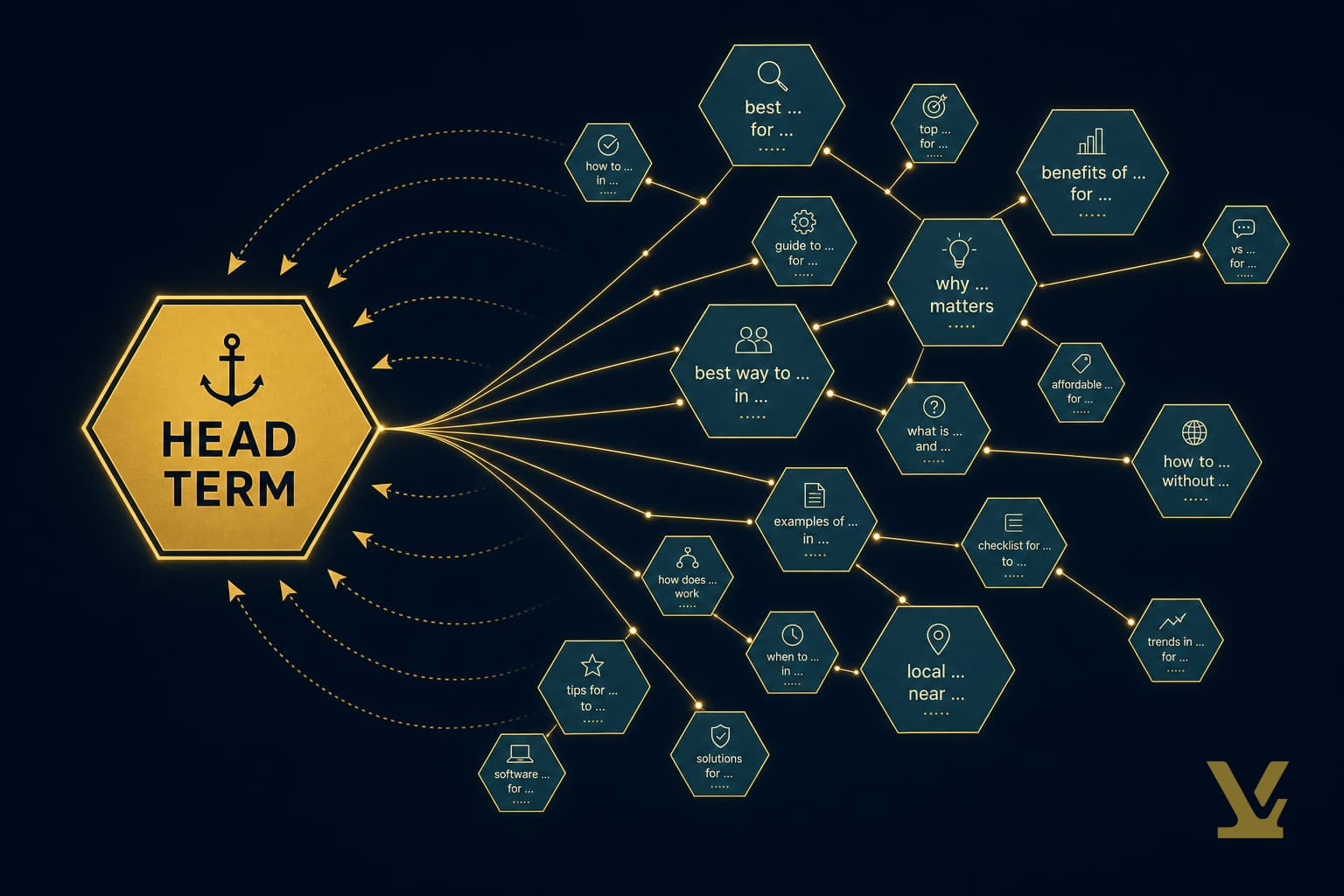

Search intent vs keywords: how to map queries to content types

A keyword is the surface form; the intent vector is the underlying job. The same keyword can carry different vectors across locations and seasons, so the mapping that holds across cases is driven by the dominant axis, not the keyword string. Our Types of Keywords: Decision Tree for Intent guide is a useful companion when picking head, mid-tail, or long-tail variants for the same vector profile.

The mapping below is the minimum coverage every brief should pin before drafting.

- Dominant I (informational) → long-form explainer with a definition in the first 100 words and a structured-data FAQ block.

- Dominant C (commercial) → comparison page with at least one ranked table, a "best for" decision matrix, and per-tool pros and cons.

- Dominant T (transactional) → product or pricing page with schema markup, in-page CTAs every 250 words, and trust signals near the buy step.

- Dominant N (navigational) → a tight destination page with one canonical link and no keyword cannibalization. Often you do not write a new article — you fix internal links.
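One way to pin this mapping in a brief pipeline is a plain lookup table. The sketch below paraphrases the four formats above; the function name `plan_page` and the output keys are illustrative assumptions, not part of any tool.

```python
# Formats paraphrased from the mapping above, keyed by axis letter.
PRIMARY_FORMAT = {
    "I": "long-form explainer with an early definition and an FAQ block",
    "C": "comparison page with a ranked table, decision matrix, pros and cons",
    "T": "product or pricing page with schema markup, CTAs, and trust signals",
    "N": "tight destination page with one canonical link",
}


def plan_page(dominant_axes, runner_up_axes):
    """Turn dominant and runner-up axes into a minimal page plan."""
    return {
        # Two or more dominant axes means a hybrid page.
        "hybrid": len(dominant_axes) > 1,
        "primary_formats": [PRIMARY_FORMAT[a] for a in dominant_axes],
        # Each runner-up axis gets its own supporting section.
        "runner_up_axes": list(runner_up_axes),
    }


print(plan_page(["I", "C"], ["T"]))
```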

Map the intent vector to a funnel: from awareness to decision

The vector also explains funnel stage without a separate framework. Awareness queries are I-dominant with a small C tail. Consideration balances C and I and starts growing T. Decision queries are T-dominant with a small N tail (people verify the brand before "buy").
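As a rough illustration, the funnel reading can be written as a small rule set. The cut-offs below are assumptions chosen to match the description above (T strictly highest means decision, C at least level with I means consideration, otherwise awareness); tune them against your own SERPs.

```python
def funnel_stage(vector):
    """Rough funnel stage from an I/C/T/N vector. The rules are assumptions."""
    i, c, t = vector["I"], vector["C"], vector["T"]
    if max(i, c, t) == 0:
        return "unclear"  # e.g. a purely navigational query
    if t > i and t > c:
        return "decision"  # T-dominant
    if c >= i:
        return "consideration"  # C balances or leads I
    return "awareness"  # I-dominant with a smaller C tail


print(funnel_stage({"I": 3, "C": 1, "T": 0, "N": 0}))
print(funnel_stage({"I": 2, "C": 2, "T": 1, "N": 0}))
print(funnel_stage({"I": 0, "C": 1, "T": 3, "N": 1}))
```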

Use that drift to populate a content plan. Score the head, mid-tail, and a representative long-tail query for each cluster — the vector profile shifts predictably and the formats follow. Our topic cluster research workflow walks through the cluster step in detail.

Over-stuffing the awareness stage is a common error. AI Overviews now appear on the majority of I-dominant SERPs (Semrush, 2024). Awareness pages compete for citation slots inside Overviews, not blue links — write fewer, deeper pieces with structured citations.

Identifying intent in real queries: signals, modifiers, and SERP clues

Three sets of signals confirm or invalidate a vector score. Use them as cross-checks when the SERP is mixed.

- Modifiers — "how", "what", "why", "guide" lean I; "best", "vs", "alternatives" lean C; "buy", "price", "near me" lean T; brand names alone lean N.

- SERP feature mix — featured snippet + PAA + knowledge panel = strong I; shopping pack + product carousel = strong T; brand sitelinks at #1 = strong N.

- Page-type majority on page one — if 7 of 10 organic results are listicles, that is C; 7 of 10 vendor product pages is T; mixed means your vector has more than one component above zero.

When the three signal sets disagree, the SERP wins. Google's classifier is the only one whose vote affects rankings. The right SERP-analysis tools can automate the count if you are scoring more than 50 queries.
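The modifier and page-type cross-checks are simple enough to script. This is a naive sketch: the modifier lists come from the bullets above, while the `brands` argument, the page-type labels (`listicle`, `product_page`), and the whole-word matching are assumptions made for illustration.

```python
from collections import Counter

# Modifier lists taken from the bullets above.
MODIFIERS = {
    "I": ("how", "what", "why", "guide"),
    "C": ("best", "vs", "alternatives"),
    "T": ("buy", "price", "near me"),
}
# Hypothetical page-type labels assigned by hand to page-one results.
PAGE_TYPE_AXIS = {"listicle": "C", "product_page": "T"}


def modifier_leaning(query, brands=()):
    """Return the set of axes a query leans toward, judged by modifiers only."""
    q = " ".join(query.lower().split())
    if q in {b.lower() for b in brands}:
        return {"N"}  # a brand name alone leans navigational
    padded = f" {q} "  # pad so modifiers match whole words only
    return {
        axis
        for axis, mods in MODIFIERS.items()
        if any(f" {m} " in padded for m in mods)
    }


def page_type_majority(page_types, threshold=7):
    """Axis implied by a page-type majority on page one, or None when mixed."""
    if not page_types:
        return None
    kind, count = Counter(page_types).most_common(1)[0]
    return PAGE_TYPE_AXIS.get(kind) if count >= threshold else None


print(modifier_leaning("best CRM for agencies"))
print(page_type_majority(["listicle"] * 7 + ["product_page"] * 3))
```

Treat the output as a cross-check only. If it disagrees with the SERP feature count, the feature count stands.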

A reproducible workflow: from query to intent-matched brief

The workflow is a five-pass loop a writer can run in under 15 minutes per brief. It produces three artifacts: a vector score, a brief outline, and a metadata block.

- Pull the target query and 5 to 7 secondary queries.

- Scan the SERP — incognito, correct geo, one scroll.

- Score the I, C, T, N counts; note dominant and runner-up.

- Shape the outline: H2 spine matches the dominant format; one H2 for the runner-up axis.

- Ship the brief: headline, meta, key questions, internal links, and the score itself.

Three copy-ready templates. Replace the bracketed slots with your specifics.

Headline — "[Topic]: [Promise tied to dominant axis] for [Audience]". Example: "Schema Markup: A Validation Checklist for Content Teams".

Meta description — "[One-sentence answer that satisfies the dominant axis]. [One sentence promising the runner-up coverage]." 150 to 160 chars; land the focus keyword in the first 60.

Brief skeleton — Title, vector score (I:_ C:_ T:_ N:_), dominant format, runner-up section name, 5 to 7 H2s, three required sources, and the conversion next-step. The brief is one page. Our content-plan walkthrough shows the same skeleton applied across an entire cluster.
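The meta-description rule and the score line in the skeleton are easy to check mechanically. The helper names below are hypothetical, and the sketch assumes "in the first 60" means the whole keyword ends within the first 60 characters.

```python
def format_score(vector):
    """Render the vector in the brief's 'I:_ C:_ T:_ N:_' form."""
    return " ".join(f"{axis}:{vector[axis]}" for axis in ("I", "C", "T", "N"))


def check_meta(meta, keyword, min_len=150, max_len=160, keyword_window=60):
    """Return a list of problems with a meta description; empty means it passes."""
    problems = []
    if not min_len <= len(meta) <= max_len:
        problems.append(f"length {len(meta)} is outside {min_len}-{max_len}")
    pos = meta.lower().find(keyword.lower())
    # Assumption: the full keyword must end within the first keyword_window chars.
    if pos == -1 or pos + len(keyword) > keyword_window:
        problems.append(f"keyword not within the first {keyword_window} characters")
    return problems


print(format_score({"I": 2, "C": 2, "T": 1, "N": 0}))
print(check_meta("Search intent explained.", "search intent"))
```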

Common mistakes, measurement, and a 2026 checklist

Three failure modes show up over and over in audits.

- Single-label thinking: writers stop at "informational" and ship an explainer for a query whose runner-up is C. The page then ranks in positions 6 to 10 and never breaks into the comparison block.

- Vector inflation: assuming every query has all four components. Most have one dominant axis and one runner-up, and the other two are often zero. Padding the page for axes that score zero dilutes the dominant axis.

- Stale scoring: scoring once at brief-time and never re-scoring. The vector drifts as Google adjusts SERP layouts.

Measurement should track three signals per page: click-through rate from Search Console (a proxy that the title and meta hit the dominant axis), engagement rate from GA4 (a proxy that the dominant-axis content delivers), and conversion rate on the in-page CTA (a proxy that the runner-up was covered well enough to keep momentum). Aim for top-quartile CTR for the keyword's position bucket; Advanced Web Ranking puts the average position-1 organic CTR near 35% on desktop.
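To make "top-quartile CTR" concrete, the sketch below computes CTR per page and the third-quartile cut-off within one position bucket. The row layout (`page`, `clicks`, `impressions`) is an assumed shape for data exported by hand from Search Console, not an API response format.

```python
import statistics


def ctr(clicks, impressions):
    """Click-through rate as a fraction; zero impressions gives 0.0."""
    return clicks / impressions if impressions else 0.0


def top_quartile_pages(rows):
    """Pages whose CTR is at or above the third quartile of the bucket.

    rows: dicts with 'page', 'clicks', 'impressions', all taken from the
    same position bucket (an assumed export shape). Needs at least two rows.
    """
    rates = {r["page"]: ctr(r["clicks"], r["impressions"]) for r in rows}
    q3 = statistics.quantiles(list(rates.values()), n=4, method="inclusive")[2]
    return [page for page, rate in rates.items() if rate >= q3]


bucket = [
    {"page": "a", "clicks": 10, "impressions": 100},
    {"page": "b", "clicks": 20, "impressions": 100},
    {"page": "c", "clicks": 30, "impressions": 100},
    {"page": "d", "clicks": 40, "impressions": 100},
    {"page": "e", "clicks": 50, "impressions": 100},
]
print(top_quartile_pages(bucket))
```

Pages outside the returned list are the first candidates for a title and meta rewrite against the dominant axis.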

The checklist for 2026 briefs:

- SERP scored against I, C, T, N — not a single label.

- Dominant axis decides the primary format; runner-up has its own H2.

- AI Overview presence noted; if an Overview appears, structured citations are required in the body.

- Title and meta description satisfy the dominant axis in the first 60 characters.

- At least one schema block (Article or FAQ) for I-dominant; Product for T-dominant.

- Internal links mirror the funnel vector drift from awareness to decision.

- Re-score quarterly; flag any query whose vector shifted by more than one point on any axis.
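The quarterly re-score check in the last item reduces to a per-axis comparison. A minimal sketch, assuming each score is stored as a dict keyed by axis letter:

```python
AXES = ("I", "C", "T", "N")


def shifted_axes(old, new, tolerance=1):
    """Axes whose score moved by more than `tolerance` points between scorings."""
    return [axis for axis in AXES if abs(new[axis] - old[axis]) > tolerance]


last_quarter = {"I": 3, "C": 1, "T": 0, "N": 0}
this_quarter = {"I": 1, "C": 2, "T": 0, "N": 0}
print(shifted_axes(last_quarter, this_quarter))
```

A non-empty result flags the query for a fresh brief; a one-point move on an axis stays within tolerance.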

How VarynForge fits in

Scoring feature mixes by hand works for ten queries — not for a 200-keyword cluster. VarynForge automates the count: every brief in our content planner already carries an I, C, T, N score with dominant and runner-up axes flagged, so writers ship intent-matched outlines without scrolling SERPs themselves.

Frequently Asked Questions

What are the main types of search intent and how do they differ?

Informational queries seek knowledge; commercial queries compare options; transactional queries act on a purchase; navigational queries reach a known destination. The differences map to distinct SERP layouts and content formats. The vector model treats them as axes a query is scored against, not labels stamped onto it — most queries land on more than one axis.

How can I tell if a keyword has high search intent?

"High intent" usually means high commercial or transactional weight. Look for shopping ads, merchant carousels, comparison-listicle dominance, and modifiers like "best", "vs", "buy", or "near me". A SERP of 7-in-10 vendor pages plus a shopping pack scores T=2 or higher — that is what high transactional intent looks like in practice.

What's the difference between a keyword and search intent?

A keyword is the string a person types; search intent is the goal behind it. The same keyword can carry different vectors across geographies or seasons. Pin the vector first, then choose the keyword variant inside that profile.

Can search intent change over time?

Yes. Seasonal queries flip between I and T. AI Overviews changed the vector for many I-dominant queries between 2023 and 2025 — the runner-up axis got more important because the dominant slot was eaten by an Overview. Re-score quarterly for any page in your top 50 by traffic.

Further Reading

- The 6 Types of Search Intent (Including the New Generative AI Intent) — SE Ranking

- What Is Search Intent? How to Identify It and Optimize for It — Semrush

- There are more than 4 types of search intent — Search Engine Land

- What is search intent? — Yoast

- VarynForge Blog

Sources

- Google — Search Quality Rater Guidelines (PDF)

- Web search query — Wikipedia (covers Broder taxonomy, 2002)

- Semrush — SEO Statistics

- Ahrefs — Search Intent: The Overlooked Ranking Factor

- Advanced Web Ranking — Google Organic CTR Study

- Google Analytics 4 — Developer Documentation

Key Takeaways

Treat search intent as a vector, not a label. Score every target query against four axes — I, C, T, N — by counting SERP feature types in under a minute. The dominant axis decides the format; the runner-up decides the supporting section.

Audit your top 20 pages with the rubric this week. Where the runner-up axis has no section in the existing page, schedule a refresh — that is usually the cheapest ranking lift in your portfolio. The vector model does not replace the four-category taxonomy; it adds the second move that finally makes "match search intent" a reproducible workflow instead of a slogan.